Executive Summary

People, Power and Technology: The Tech Workers’ View is the first in-depth research into the attitudes of the people who design and build digital technologies in the UK. It shows that workers are calling for an end to the era of moving fast and breaking things.

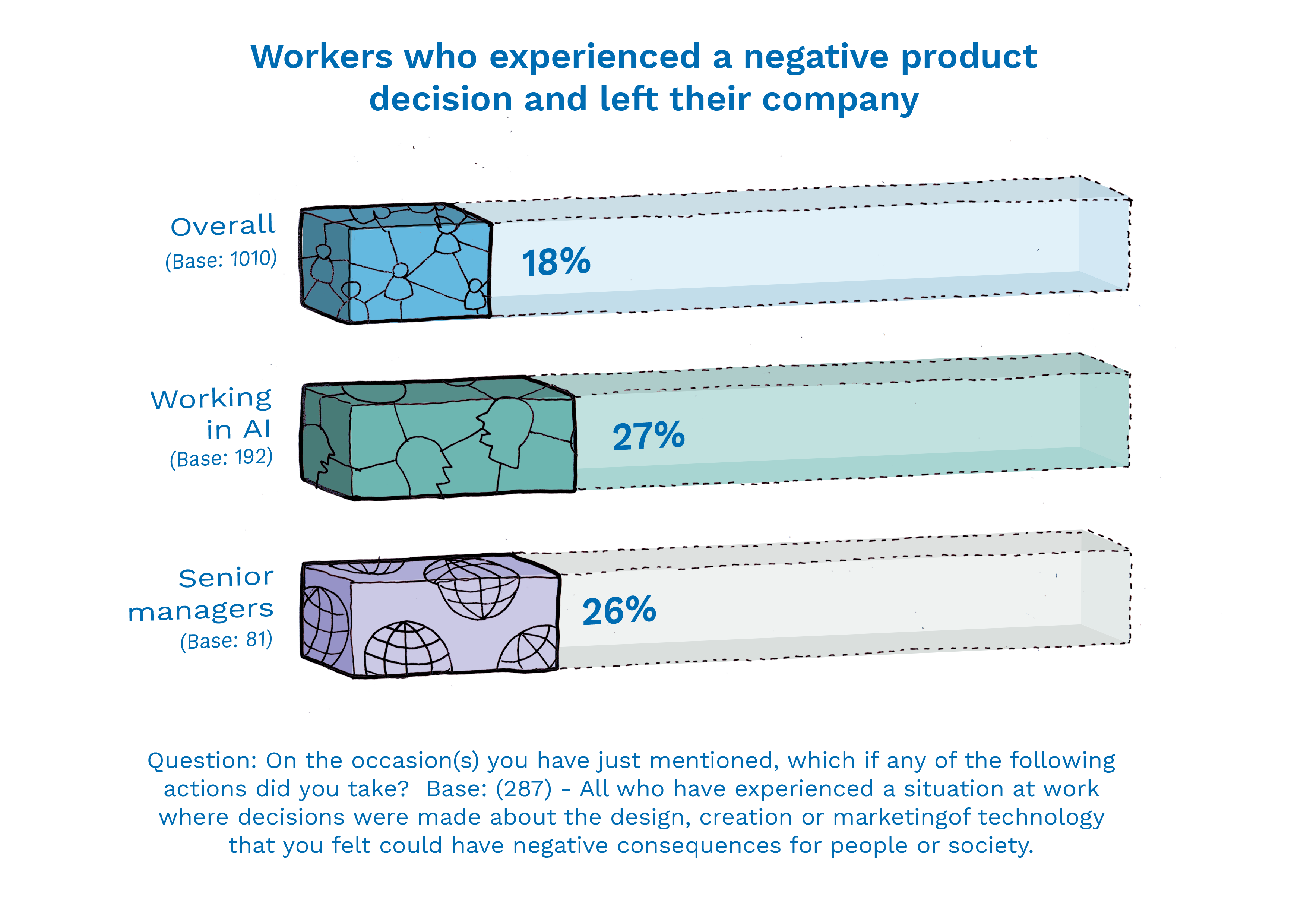

Significant numbers of highly skilled people are voting with their feet and leaving jobs they feel could have negative consequences for people and society. This is deepening the UK’s tech talent crisis and driving up employers’ recruitment and retention bills. Organisations that understand and meet their teams’ demands to work responsibly will gain a new competitive advantage.

While Silicon Valley CEOs have tried to reverse the “techlash” by publicising their responsible credentials in the media, this research shows that workers:

- need guidance and skills to help navigate new dilemmas

- have an appetite for more responsible leadership

- want clear government regulation so they can innovate with awareness

Every technology worker who leaves a company does so at a cost of around £30,000.* Failing to address workers’ concerns is bad for business, especially when the market for skilled workers is so competitive.

Our research shows that tech workers believe in the power of their products to drive positive change — but they cannot achieve this without ways to raise their concerns, draw on expertise, and understand the possible outcomes of their work. Counter to the well-worn narrative that regulation and guidance kill innovation, this research shows they are now essential ingredients for talent management, retention and motivation.

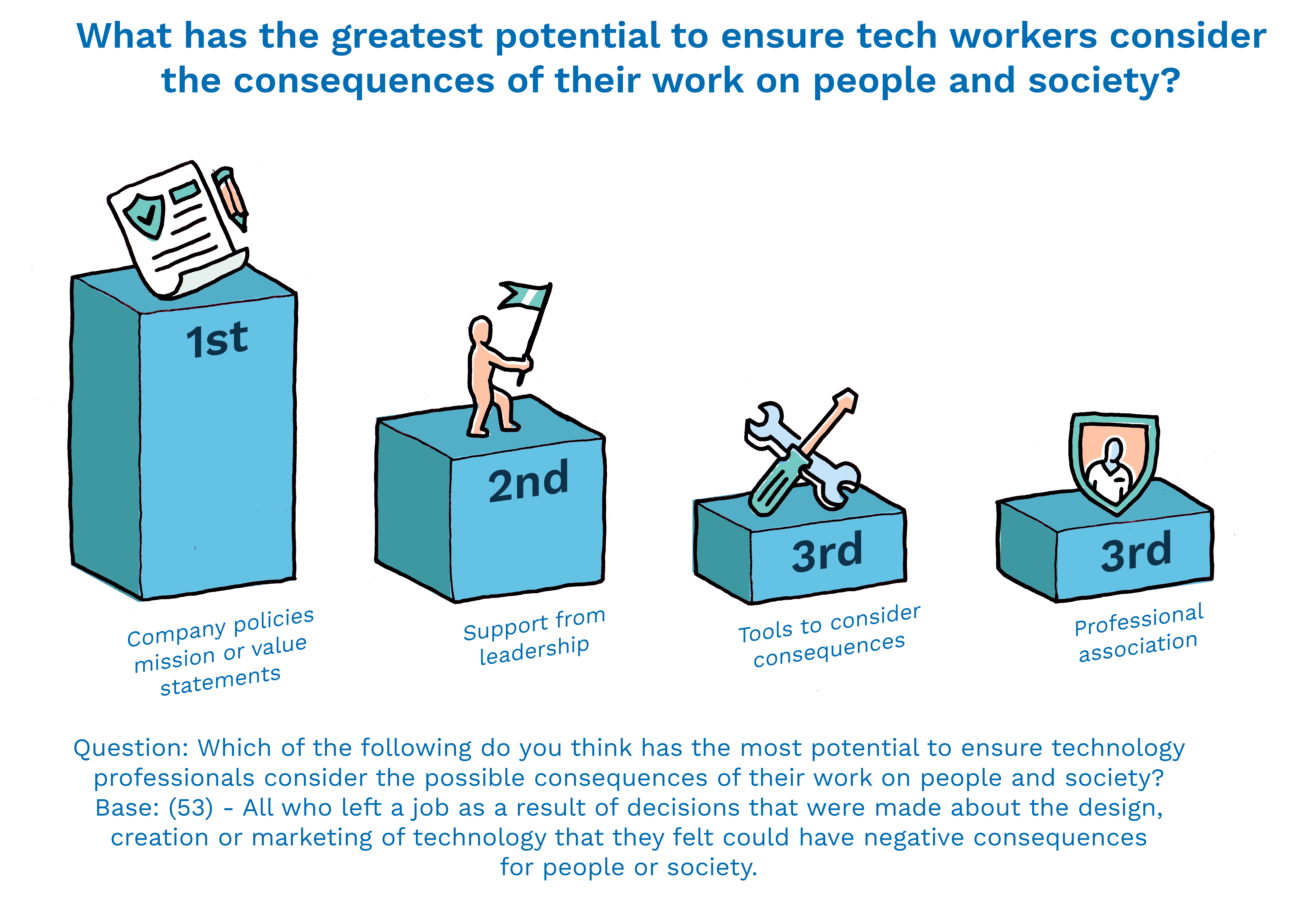

The tech industry must now move beyond gestures towards ethical behaviour. Rather than drafting more voluntary codes and recruiting more advisory boards, it should double down on responsible practice. Workers, particularly those in the field of AI, want practical guidelines so they can innovate with confidence.

*Oxford Economics (2014) ‘The Cost of Brain Drain’. http://resources.unum.co.uk/downloads/cost-brain-drain-report.pdf

Our recommendations

Businesses should:

- Implement transparent processes for staff to raise ethical and moral concerns in a supportive environment

- Invest in training and resources that help workers understand and anticipate the social impact of their work

- Use industry-wide standards and support the responsible innovation standard being developed by the BSI: 78% of workers favour such a framework

- Engage with the UK government to share best practice and support the development of technology-literate policymaking and regulation

- Rethink professional development, so workers in emerging fields can draw on a wider skills and knowledge base — not just their own ingenuity and resources

Government should:

- Provide incentives for responsible innovation and embed this into its Industrial Strategy

Key Findings

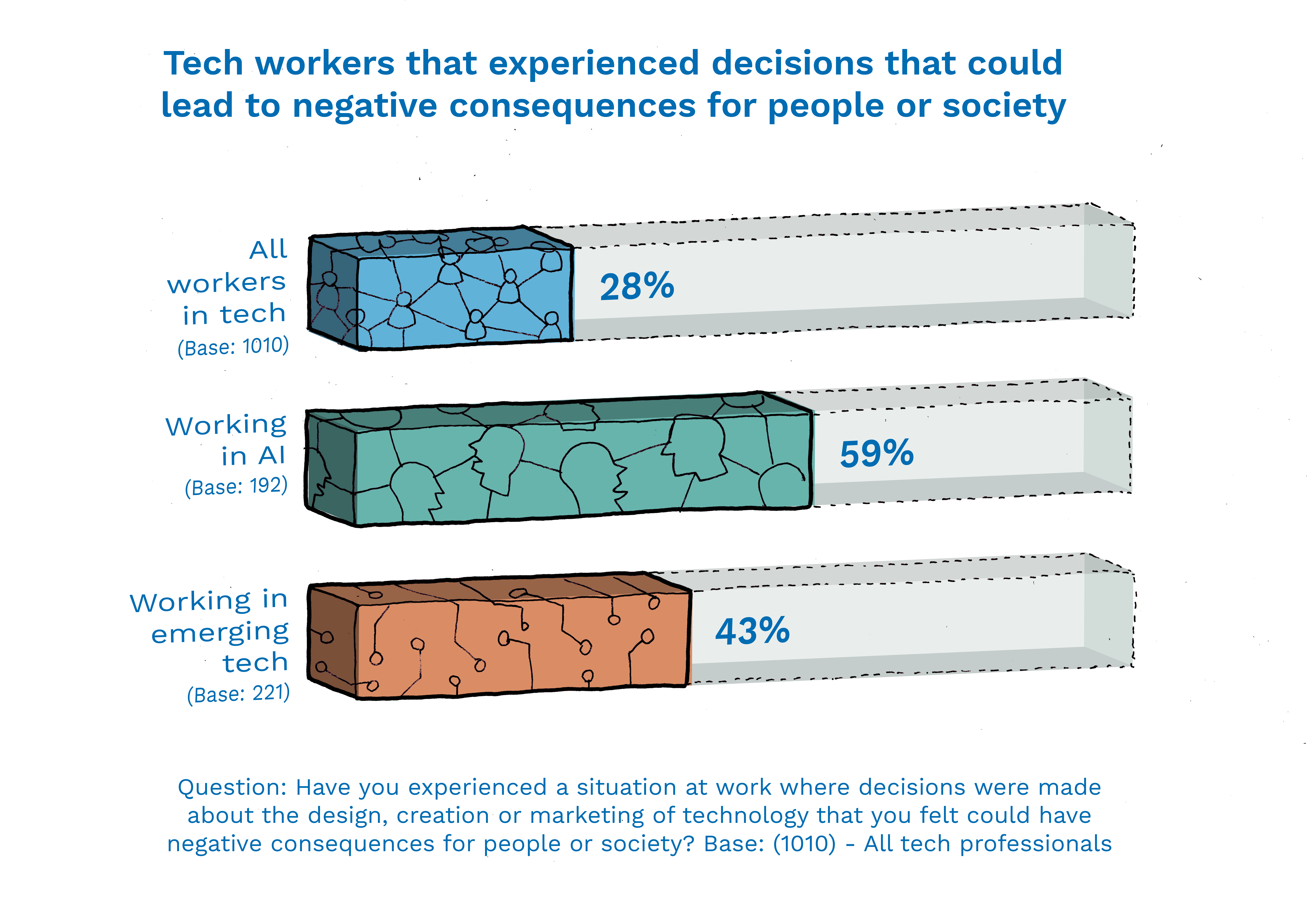

- More than a quarter (28%) of tech workers in the UK have seen decisions made about a technology that they felt could have negative consequences for people or society. Nearly one in five (18%) of those went on to leave their companies as a result.

- The potential negative consequences these workers identified include the addictiveness of technologies, the negative impact on social interaction and the potential for job losses through automation. They also highlighted failures in safety and security and inadequate testing before product releases.

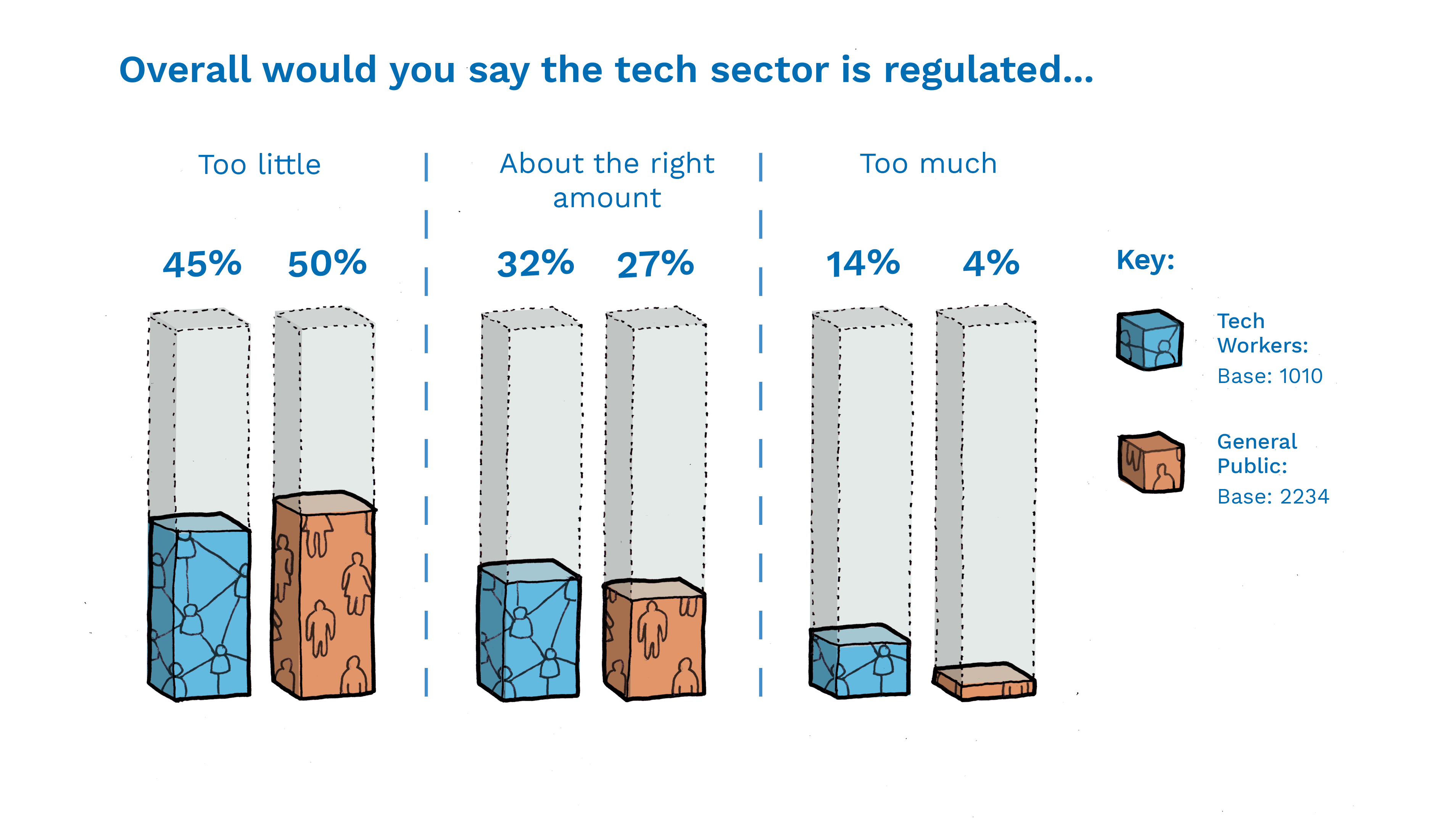

- Government regulation is the preferred mechanism among tech workers for ensuring that the consequences of technology for people and society are taken into account. Yet almost half of people in tech (45%) believe their sector is currently regulated too little.

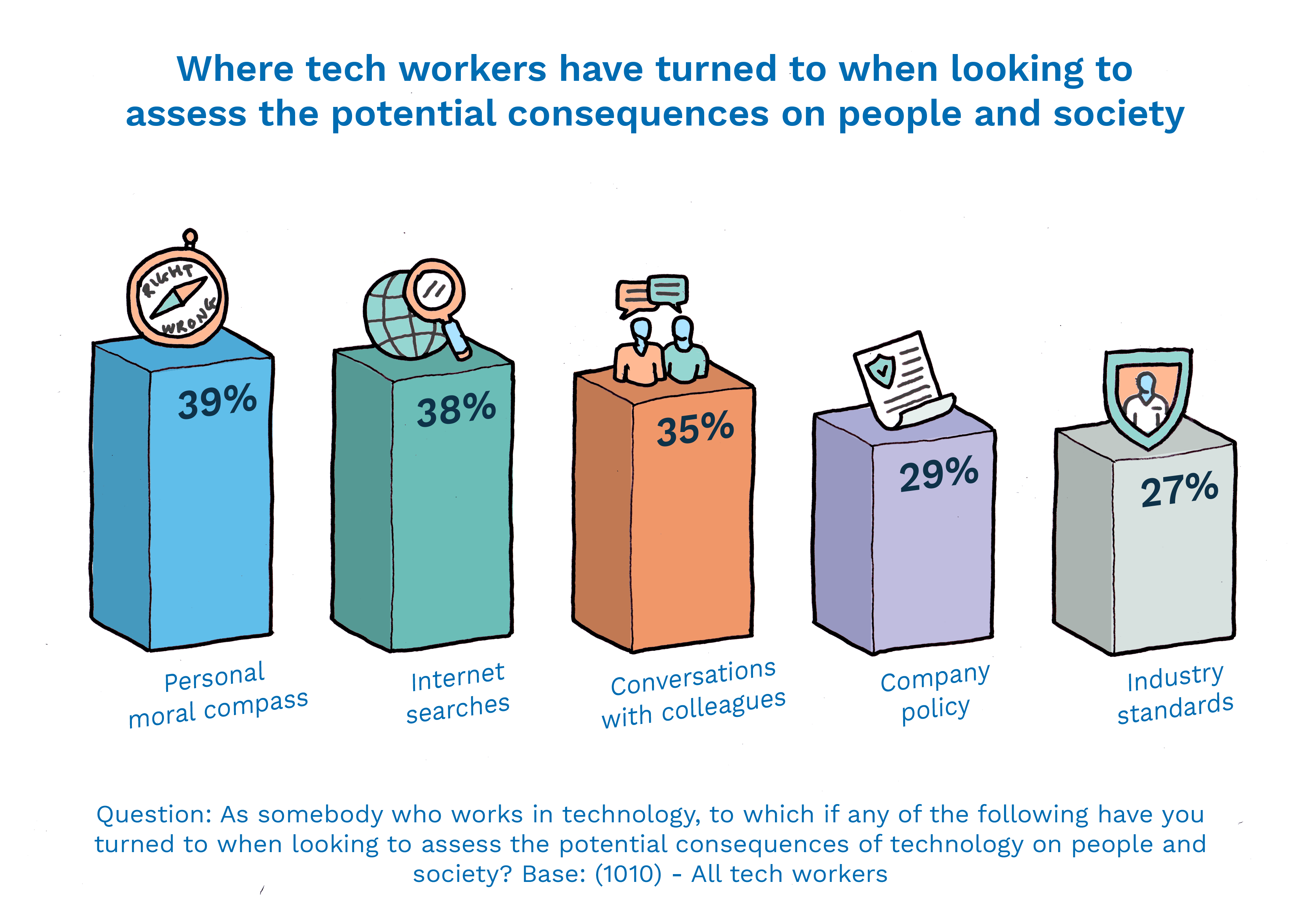

- Tech workers want more time and resources to think about the impacts of their products. Nearly two-thirds (63%) would like more opportunity to do so, and more than three-quarters (78%) would like practical resources to help them. Currently they rely most on their personal moral compass, conversations with colleagues and internet searches to assess the potential consequences of their work.

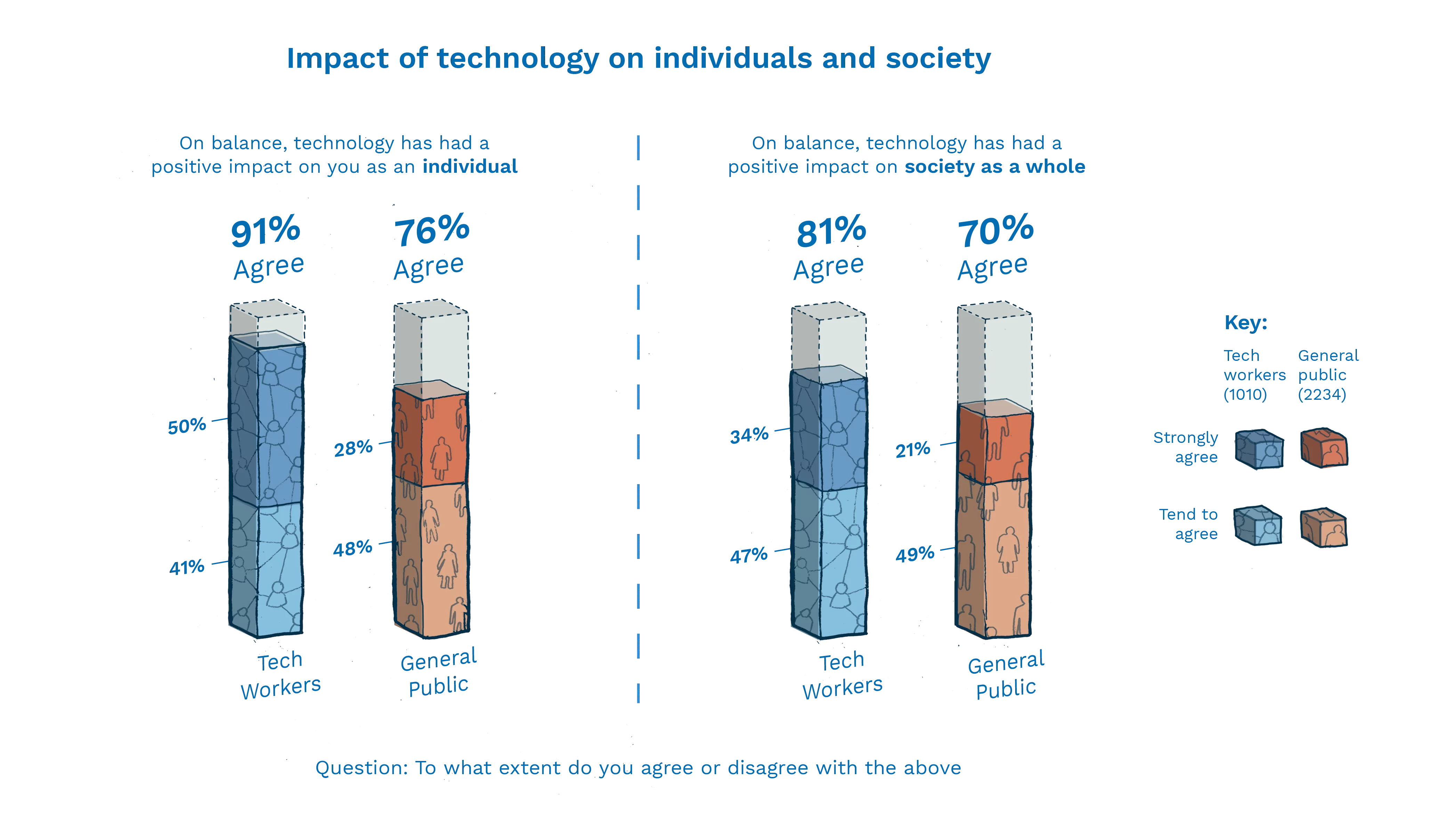

- Despite their concerns, the vast majority of tech workers believe technology is a force for good: 90% say technology has benefited them individually, and 81% that it has benefited society as a whole. Looking ahead, they are excited by the potential of technology to address issues like climate change and transform healthcare, though they remain alert to the possible downsides of such new technologies.